In this Vast.ai review, we look at the cost, features, and reliability of the service, and compare it to better-known services such as Google Colab and Amazon SageMaker.

Table of Contents

- What is Vast.ai?

- Vast.ai review

- Vast.ai vs Amazon SageMaker

- Vast.ai vs Google Cloud

- Vast.ai vs Google Colab

- Key points

What is Vast.ai?

Vast.ai is a marketplace where GPU owners rent out their hardware to users who need it for heavy-duty deep learning or mining. It has a wide range of GPUs available from all over the world. You can rent either on-demand instances or interruptible instances, which are cheaper but can be interrupted at any time.

Vast.ai allows access via either SSH or a Jupyter notebook. Once you sign up, you can select from a variety of different GPU configurations. Some of the options are RTX 3090s, 4090s, A6000s, and A100s. There are also plenty of multiple-GPU configurations available.

Vast.ai review

Ease of setup

While we generally found Vast.ai to be great, ease of setup and use isn’t its strong point. The site design is somewhat amateurish, and doesn’t give off the sense of a large, reliable business.

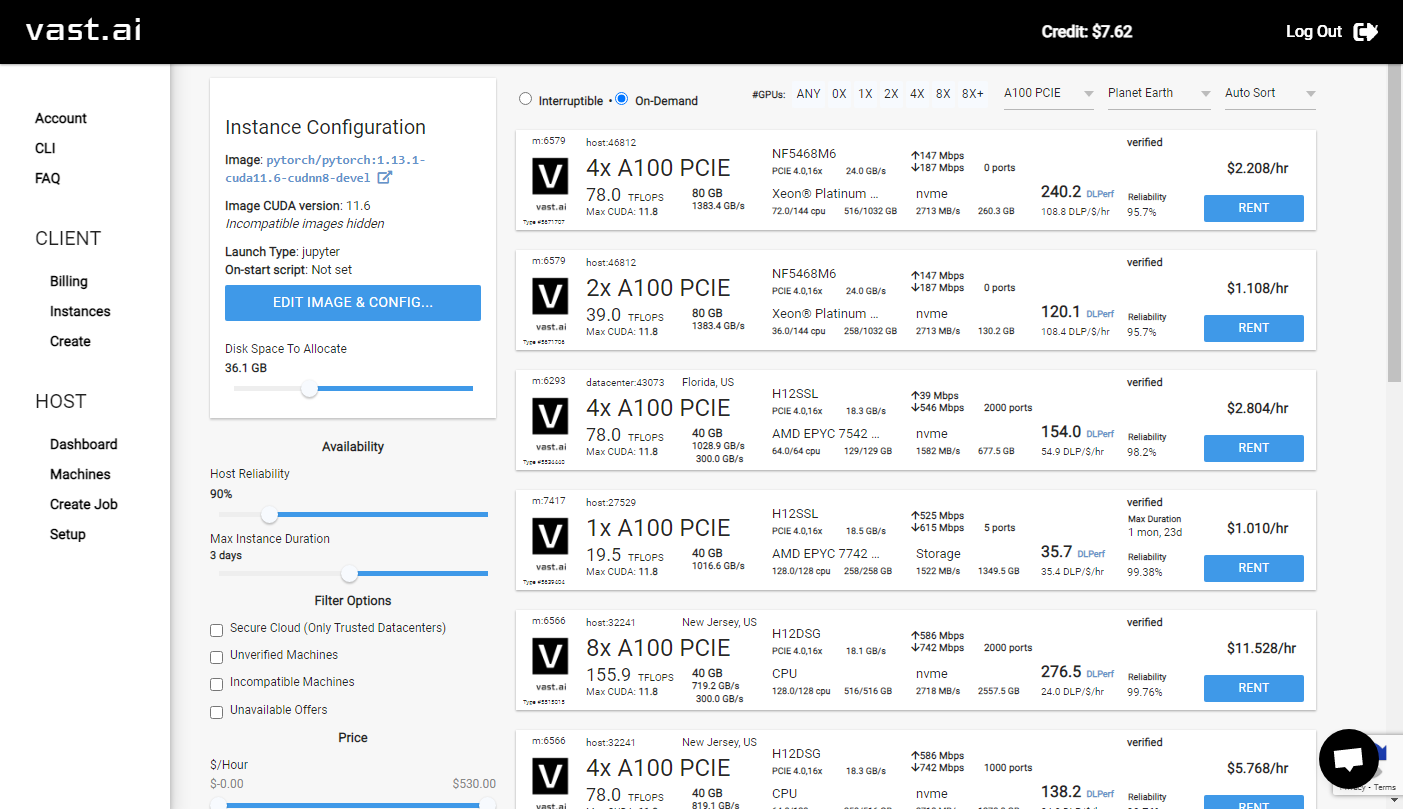

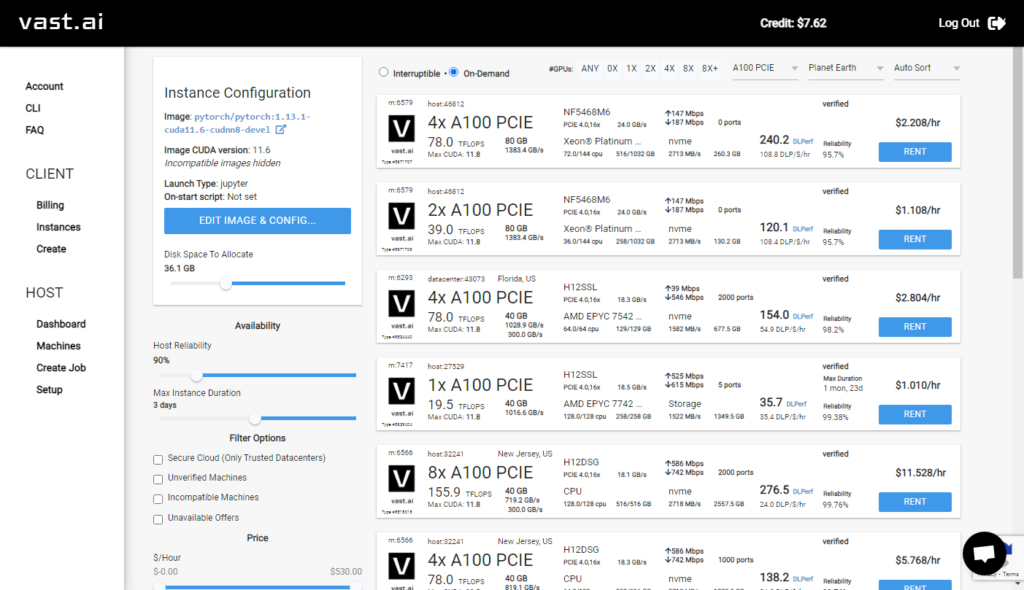

Signing up is relatively easy. You don’t need to put in much information to get to the point where you can view available instances. After you have signed up, you will see a dashboard like this:

On the left, there is an admin menu, where you can either rent an instance or make an instance available for rent. On the right, you can see a list of available instances.

Vast.ai makes it easy to find the right instance type. You can sort or search by GPU, processor, and much more. For our test work, we found the A6000 GPUs to be the best value. If you’re running code that’s multi-GPU friendly, you can find some great higher-end options as well.

You receive some free credit for signing up, so you don’t actually need to deposit any money in order to start an instance. However, this causes some confusion, since the credit is not enough to actually complete the instance setup.

In our test, we got to the point of uploading data. As we uploaded, the instance suddenly became unresponsive and started showing errors. It took us some time to realize that we had run out of trial credit and our instance was shut down.

Usability

Once we realized what was going on, getting everything running was simple. We deposited some money using a credit card, and we were able to use the service without a problem. The Jupyter notebook interface is intuitive and familiar. And everything ran relatively smoothly.

One thing we didn’t like was that instances seemed to take a long time to provision. Although we didn’t time them, they felt slower to start than Amazon SageMaker, and significantly slower than Google Colab.

Turning off instances also seemed to take longer than comparable services. However, considering the price difference between Vast.ai and other services, the additional time was worth it.

Cost effectiveness

Cost effectiveness is where Vast.ai really shines. For comparison, we purchased an RTX A6000 48 GB GPU. The current street price of that GPU is around $4,000, even though it’s no longer cutting edge.

The cost to rent this GPU without interruption is around $0.60 per hour. There are additional costs for disk space and I/O, so the all-in cost is around $0.75 per hour. That means we would have to use the GPU we purchased 24/7 for over 200 days to recoup the cost.
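The break-even estimate above can be reproduced with a short calculation. This is a minimal sketch using only the figures quoted in this review; the constant and function names are ours, and it ignores electricity, resale value, and price changes, so treat the result as a rough guide.

```python
# Figures quoted in this review; treat them as point-in-time estimates.
PURCHASE_PRICE_USD = 4000.0       # approximate street price of an RTX A6000
RENTAL_RATE_USD_PER_HOUR = 0.75   # all-in rental rate (GPU + disk + I/O)


def break_even_hours(purchase_price: float, hourly_rate: float) -> float:
    """Hours of rental that cost as much as buying the GPU outright."""
    return purchase_price / hourly_rate


hours = break_even_hours(PURCHASE_PRICE_USD, RENTAL_RATE_USD_PER_HOUR)
days = hours / 24  # assumes continuous 24/7 use

print(f"Break-even after {hours:.0f} hours, or about {days:.0f} days of 24/7 use")
```

With these inputs the break-even point lands at a little over 5,300 hours, which is consistent with the "over 200 days" figure.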

The difference is even starker with SageMaker. Monthly ML storage is almost 1000x more expensive than the pricing we got from Vast.ai. Google Colab is a bit different, because you don’t get actual costs per GPU, but we will look into that more in a later section.

Performance

We tested a Vast.ai A6000 GPU against a local A6000 GPU with similar specs. Our test process was a large deep learning training task, which we benchmarked at 8 hours on the local GPU.

The Vast.ai instance performed very similarly to the local GPU. The time to complete the task was within a few minutes of the local GPU. Vast.ai doesn’t share GPUs, so you are getting 100% of the GPU for any time you’re being billed for.

You can expect roughly the same performance from a Vast.ai GPU as from a local GPU of the same model. We were very happy with the performance we saw.

Vast.ai vs Amazon SageMaker

Amazon SageMaker is ridiculously expensive for what you get, and the difference is stark when compared to Vast.ai.

In the table below, we compare the cost of a similar instance on SageMaker and Vast.ai. For a similar GPU, Amazon’s price is roughly 7x higher than Vast.ai’s. For storage, Amazon’s price is several hundred times higher.

| | Vast.ai | Amazon SageMaker |
| --- | --- | --- |
| $/hour for 1 GB storage | $0.0002 | $0.14 |
| $/hour for 1x V100 GPU | $0.42 | $3.06 |
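
The ratios implied by the table can be checked with a few lines of Python. This is only a sketch: the dictionary keys are our own labels, and the prices are the ones listed above, which may have changed since we ran our tests.

```python
# Hourly prices in USD, copied from the comparison table above.
prices = {
    "storage_gb_hour": {"vast": 0.0002, "sagemaker": 0.14},
    "v100_gpu_hour": {"vast": 0.42, "sagemaker": 3.06},
}

# How many times more expensive SageMaker is for each line item.
ratios = {item: p["sagemaker"] / p["vast"] for item, p in prices.items()}

for item, ratio in ratios.items():
    print(f"{item}: SageMaker costs about {ratio:.1f}x the Vast.ai price")
```

On these numbers, the GPU works out to a bit over 7x and storage to about 700x.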

The differences don’t end there, however. With SageMaker, setting up a deep learning environment can take hours, with many different steps across multiple AWS products and services. Billing is also extremely complicated with SageMaker, so it’s never clear how much you will pay for a service.

The problems with SageMaker billing are so bad that it’s easy to end up with huge surprise bills at the end of a month. Even when SageMaker is shut off, the underlying services may continue to bill.

Vast.ai and SageMaker offer similar GPU options, but SageMaker is far more expensive than Vast.ai. In our opinion, there is absolutely no reason to pick Amazon SageMaker over Vast.ai.

Vast.ai vs Google Cloud

Google Cloud (which is different from Google Colab, below) is priced slightly better than SageMaker. Google doesn’t offer a direct comparison between its TPUs and comparable GPUs from Nvidia, so we can’t do an exact comparison like the one above.

However, in our tests, we found that a Google TPU v4 performed similarly to an A100 GPU. Vast.ai has single A100 instances for as little as $1.00 per hour. Google charges $3.22 per hour for a TPU v4. So you get substantial savings using Vast.ai.

Vast.ai vs Google Colab

The difference between Google Colab and Vast.ai is a bit less stark. Google Colab offers a generous amount of free compute time. But the amount you receive depends on a lot of different factors, and Google doesn’t disclose it.

Furthermore, Google sells compute units for about $0.10 each. But the GPU equivalent of a compute unit varies based on a number of factors. In our testing, we found that the standard GPU available on Colab ran our deep learning training task at about 1/4 the speed of the A6000 on Vast.ai.
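
To make the speed gap concrete, here is a rough sketch that combines figures quoted elsewhere in this review: the 8-hour benchmark on the A6000, the roughly 1/4 relative speed of Colab's standard GPU, and the $0.75 all-in hourly rate on Vast.ai. The variable names are ours, and it assumes runtime scales inversely with relative speed, which is a simplification.

```python
# Figures quoted in this review.
A6000_RUNTIME_HOURS = 8.0          # our benchmark training task on an A6000
COLAB_RELATIVE_SPEED = 0.25        # Colab's standard GPU vs. the A6000
VAST_ALL_IN_USD_PER_HOUR = 0.75    # GPU + disk + I/O on Vast.ai

# Assumption: runtime is inversely proportional to relative speed.
colab_runtime_hours = A6000_RUNTIME_HOURS / COLAB_RELATIVE_SPEED
vast_job_cost_usd = A6000_RUNTIME_HOURS * VAST_ALL_IN_USD_PER_HOUR

print(f"Estimated Colab standard GPU runtime: {colab_runtime_hours:.0f} hours")
print(f"Estimated Vast.ai cost for the same job: ${vast_job_cost_usd:.2f}")
```

In other words, the trade-off on the free tier is time rather than money: on these assumptions, a job that takes a working day on Vast.ai would take well over a day on Colab's standard GPU.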

Using Google Colab’s paid compute units, we found that an hour on an A100 equivalent cost about $1.60.

| | Vast.ai | Google Colab Premium |
| --- | --- | --- |
| $/hour for 1 GB storage | $0.0002 | included |
| $/hour for 1x A100 GPU | $1.06 | $1.60 |
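
As a quick sanity check on these numbers, the sketch below derives two figures from the prices quoted in this section: how many compute units an A100-equivalent hour appears to consume, and how much cheaper the Vast.ai A100 is per hour. The constant names are ours, and the compute unit figure is an inference from our own spending, not a rate published by Google.

```python
# Prices in USD, as quoted in this section.
COMPUTE_UNIT_PRICE_USD = 0.10     # approximate price of one Colab compute unit
COLAB_A100_USD_PER_HOUR = 1.60    # what we paid for an A100-equivalent hour
VAST_A100_USD_PER_HOUR = 1.06     # Vast.ai A100 price from the table

# Implied compute units consumed per A100-equivalent hour on Colab.
units_per_hour = COLAB_A100_USD_PER_HOUR / COMPUTE_UNIT_PRICE_USD

# Fraction saved per hour by renting the A100 on Vast.ai instead.
savings_fraction = 1 - VAST_A100_USD_PER_HOUR / COLAB_A100_USD_PER_HOUR

print(f"Implied compute units per A100 hour: {units_per_hour:.0f}")
print(f"Vast.ai savings per A100 hour: {savings_fraction:.0%}")
```

On these figures, an A100-equivalent hour costs about 16 compute units, and Vast.ai comes out roughly a third cheaper.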

Here again, Vast.ai is cheaper if you’re looking for a higher-end option. Of course, if you only need the power of the free tier, Google’s free Colab option can’t be beat.

Key points

In our Vast.ai review, we found that Vast.ai is a great option for anyone looking to perform deep learning tasks in the cloud.

- Vast.ai is significantly cheaper than Amazon SageMaker, and is easier to use

- Vast.ai is somewhat cheaper than Google Colab’s paid version, and is significantly faster than Google Colab’s free version